Most data work does not fail because one person ran too slowly.

It fails because the track is too long.

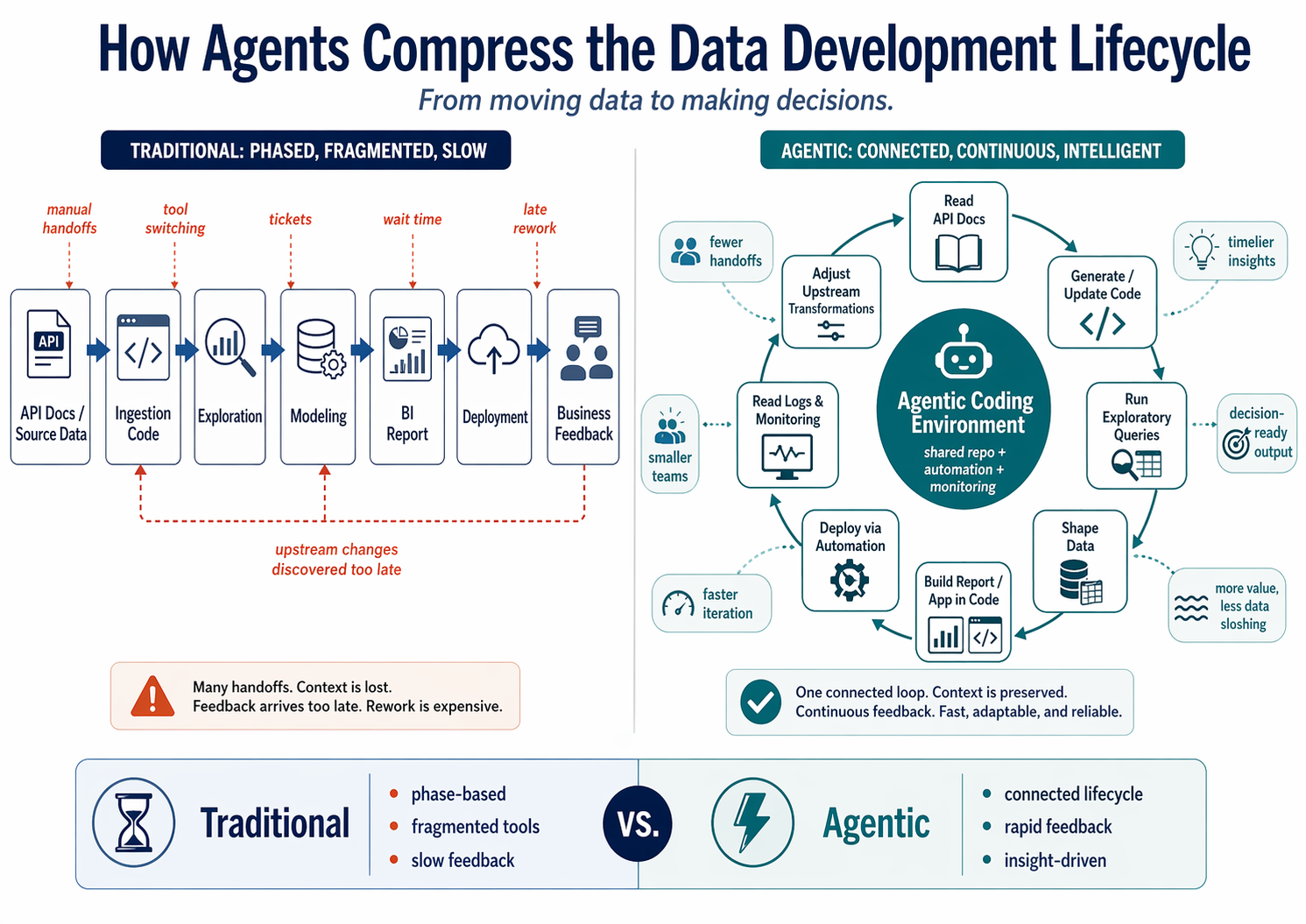

A question starts with the business, moves through engineering, passes into analytics, lands in a report, and eventually comes back as feedback. Every handoff adds distance. Every distance creates a chance to lose context.

For years, we tried to make each runner faster. Better ETL tools. Better BI tools. Better deployment systems. Better project management.

Useful, but incomplete.

Agents change something more fundamental. They do not just make the runners faster. They shorten the track.

When an agent can move between code, Git, deployment systems, logs, APIs, databases, notebooks, semantic models, and reports, the work stops behaving like a long relay. It starts behaving like a tight feedback loop.

The Relay Race Problem

A relay race is not just a bunch of people running.

It is a fragile sequence of handoffs.

One runner comes in at full speed. The next runner is already moving. The baton has to pass from one hand to another inside a narrow exchange zone, at exactly the right moment, without either runner slowing down too much or drifting out of sync.

That is harder than it looks.

The running is not the risky part. The handoff is.

That is why relay teams need so much coordination. The coach does not just say, “Run fast.” They define who starts where, when the next runner should take off, which hand receives the baton, how many steps to count, and what to do if the exchange starts to fall apart.

Speed creates fragility.

The faster everyone runs, the more precise the handoff has to be. One mistimed step, one unclear signal, one runner leaving too early, and the baton hits the track.

Current data development has the same problem.

A stakeholder starts with a question. That question gets handed to a data engineer. The engineer pulls the source data, writes the ingestion logic, fixes the pipeline, or reshapes the upstream table. Then the baton moves to an analyst, who builds the model, writes the measures, creates the dashboard, and tries to turn the data into something the business can actually use.

Then the baton moves again.

The stakeholder opens the dashboard, clicks around, and decides whether it answers the question.

If it does, great. The race ends.

If it does not, the team starts another race.

New clarification. New ticket. New query. New transformation. New validation pass. New dashboard review.

Every new race has overhead.

And just like in a relay, the baton is not just an object. In data work, the baton is context.

It is the business meaning. The assumptions. The edge cases. The schema choices. The validation rules. The reason one metric matters and another does not. The detail someone mentioned in a meeting that never made it into the ticket but completely changes how the report should work.

That context is fragile.

The farther it travels, the easier it is to drop.

This is why data teams end up needing so much process around the work. Project managers, tickets, status meetings, requirements docs, acceptance criteria, dashboard review sessions, deployment checklists, validation spreadsheets.

None of that is pointless.

It exists because the handoffs are hard.

When the course is long and the runners are spread across different tools, teams, and systems, you need more coordination just to keep the baton moving safely. The project manager becomes the coach. The ticket becomes the race plan. The status meeting becomes the exchange-zone drill.

That is not a failure of the team.

That is the cost of the operating model.

Agentic workflows change the shape of the race.

The runners are no longer spread around the full track, sprinting their own leg and hoping the next handoff lands cleanly. They are standing closer together. Close enough to pass the baton by hand. Close enough to pass it forward, backward, or sideways depending on what the work needs next.

That is what it means to shorten the track.

The handoffs do not disappear. They get easier. The baton still moves from code to deployment, from deployment to logs, from logs back to code, from output to validation, from validation back to the transformation. But it does not have to travel across the whole course every time something changes.

You do not need as much ceremony when the runners are standing next to each other. You do not need a coach managing every step of the exchange. You do not need every correction to become a new race.

The work becomes less linear.

Less request, handoff, finish, restart.

More observe, adjust, validate, repeat.

That is the real shift.

Not just faster runners.

A shorter course. Cleaner handoffs. Less coordination overhead. Tighter feedback loops.

The New Operating Layer

Application development is the cleanest place to see this new race take shape.

Take a typical AWS app deployment.

The application code lives in a repo. Git tracks the changes. GitHub Actions handles the deployment. A CloudFormation template defines the infrastructure. AWS runs the application. CloudWatch captures the logs. The database holds the state the application is supposed to read and write.

In a traditional workflow, those pieces are separate stops on the track. You write the code, update the CloudFormation template, commit the change, push it, wait for GitHub Actions to deploy it, then go check what happened. If something breaks, you open AWS, inspect the stack, find the CloudWatch logs, check the database, copy the useful error or result, return to the repo, make the fix, and push again.

That is the relay.

The code is in one place. The deployment state is in another. The infrastructure status is somewhere else. The logs are in CloudWatch. The application state is in the database. The fix is back in the repo.

None of those steps are especially hard.

But the feedback is always somewhere else.

The agent changes that by standing in the middle of the operating layer. It can edit the code, adjust the CloudFormation template, commit the change, push to GitHub, watch the deployment, inspect the AWS stack, read the CloudWatch logs, query the database through the AWS CLI, connect the failure back to the file that caused it, patch the issue, and redeploy.

Same tools.

Different geometry.

The repo, Git, GitHub Actions, CloudFormation, AWS runtime, CloudWatch logs, and database state are no longer runners scattered around the track. They are close enough for the agent to move the baton between them without a sprint.

A bad stack update can pass straight back to the CloudFormation template.

A runtime error can pass straight back to the code.

A database mismatch can pass straight back to the application logic.

A failed deployment is no longer the finish line of one race and the starting line of another. It is just the next handoff inside the same loop.
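The loop described above fits in a few lines. This is a minimal illustration, not an agent framework. `deploy`, `observe`, and `patch` are hypothetical callables standing in for the real steps: pushing so GitHub Actions deploys, reading stack events and CloudWatch logs, and editing the file the error points to. The fakes at the bottom simulate one failed deploy followed by a fixed one.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Observation:
    ok: bool
    source: str      # which system reported back, e.g. "stack" or "logs"
    detail: str = ""


def run_loop(
    deploy: Callable[[], None],
    observe: Callable[[], Observation],
    patch: Callable[[Observation], None],
    max_iterations: int = 5,
) -> List[Observation]:
    """Deploy, observe, and patch until a check passes or the budget runs out."""
    history: List[Observation] = []
    for _ in range(max_iterations):
        deploy()
        result = observe()
        history.append(result)
        if result.ok:
            break
        # The failure is not a finish line; it becomes the input to the next change.
        patch(result)
    return history


# Simulated systems: the first deploy surfaces an error in the logs,
# and the patched deploy comes back clean.
state = {"fixed": False}


def fake_deploy() -> None:
    pass  # stands in for "commit, push, wait for the pipeline"


def fake_observe() -> Observation:
    if state["fixed"]:
        return Observation(ok=True, source="logs")
    return Observation(ok=False, source="logs", detail="KeyError: 'user_id'")


def fake_patch(obs: Observation) -> None:
    state["fixed"] = True  # stands in for "edit the file the error points to"


history = run_loop(fake_deploy, fake_observe, fake_patch)
print([o.ok for o in history])  # [False, True]
```

The `max_iterations` budget is a deliberate choice. When the loop runs out of attempts, it returns the history instead of retrying forever, and that is the point where a human should look.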

That is the new operating layer.

Not one magic platform. Not one AI trick. A shared, scriptable surface where the systems involved in delivery are close enough for an agent to move between them.

Git gives the checkpoint. GitHub Actions gives the deployment state. CloudFormation gives the infrastructure definition. AWS gives the runtime. CloudWatch gives the feedback. The database gives the application state. The repo gives the place to make the next change.

Once those pieces are connected, the race stops being a long sequence of full-speed handoffs.

It becomes a tight exchange zone.

The baton still moves.

It just does not travel as far.

Where Fabric Gets Interesting

The AWS loop is easy to see because app failures are loud. The deployment fails. The stack rolls back. The endpoint crashes. The logs show the error. The database tells you whether the app is actually doing what it was supposed to do.

Data engineering has the same relay problem, but the signals are quieter. A pipeline can run successfully and still produce the wrong answer. A notebook can complete and still duplicate rows. A dashboard can load and still hide a bad assumption.

It is also messier underneath.

Application development already fits a fairly coherent operating model: code in a repo, infrastructure in a template, deployment through Actions, runtime in AWS, logs in CloudWatch, state in the database. Different systems, but the same software delivery pattern.

Data engineering does not fit cleanly into one pattern.

The systems do not always speak the same language.

That is especially true in BI-heavy workflows. You might write ingestion logic in Python, transform data with SQL or PySpark, store the output in a Lakehouse, expose it through a Warehouse or SQL endpoint, model it in Power BI, define business logic in DAX, and then shape the final experience through a report layout that has historically been very UI-driven.

That is a lot of runners.

And they are not all built for the same race.

A Python notebook does not think like a Power BI semantic model. A Lakehouse table does not think like a DAX measure. A pipeline run does not think like a report layout. A business metric does not always map cleanly to the transformation that produced it.

This is why data work needs so much translation.

The engineer understands the source system. The analyst understands the report logic. The BI developer understands DAX and layout. The stakeholder understands what the number is supposed to mean.

The baton is not just moving between tools.

It is moving between languages, mental models, and layers of abstraction.

That is what makes the handoffs fragile.

Fabric is interesting because it starts to pull those layers into one environment. Not perfectly. Not magically. But enough to change the shape of the work.

You have notebooks, pipelines, Lakehouses, Warehouses, SQL endpoints, semantic models, reports, deployment pipelines, APIs, and increasingly, code-backed configuration for assets that used to feel locked inside the UI.

That last part matters.

Power BI has always been powerful, but it has also been deeply UI-centered. A lot of real business logic ends up hidden behind clicks: relationships, measures, visuals, formatting, filters, interactions, page layout. That works well for humans, but it is not naturally agent-friendly.

Agents need something they can read and modify.

Code changes the game.

When Power BI assets can be represented as files and configuration (Power BI Project files, for example, which can store the semantic model definition as TMDL text), the report stops being only something someone clicks around in. It becomes closer to software. The agent can inspect it, compare it, modify it, track it in Git, deploy it, and roll it back.
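Once a definition lives in a text file, ordinary diff tooling applies. The sketch below runs Python's standard `difflib` over two hypothetical versions of a measure definition. The text is only loosely shaped like a file-based semantic model; the exact file format here is an assumption, not a faithful reproduction.

```python
import difflib
from typing import List


def changed_lines(before: str, after: str) -> List[str]:
    """Return only the removed ("- ") and added ("+ ") lines between two versions."""
    return [
        line
        for line in difflib.ndiff(before.splitlines(), after.splitlines())
        if line.startswith(("- ", "+ "))  # drop unchanged ("  ") and hint ("? ") lines
    ]


# Hypothetical before/after text of one measure, as it might sit in a repo.
before = """measure 'Total Sales' = SUM(Sales[Amount])
    formatString: #,0"""
after = """measure 'Total Sales' = CALCULATE(SUM(Sales[Amount]), Sales[IsReturn] = FALSE())
    formatString: #,0"""

changes = changed_lines(before, after)
for line in changes:
    print(line)
```

The unchanged format line drops out, and the change to the measure body shows up as one removed line and one added line. That is the same view a reviewer gets in a pull request, and it is something an agent can read without opening a properties pane.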

That turns Power BI from a finish-line artifact into another runner inside the loop.

Now the agent can move across more of the data lifecycle. It can update the Python transformation, adjust the SQL logic, run the Fabric pipeline, query the Lakehouse output, check the Warehouse table, validate row counts and schemas, inspect the semantic model, modify a DAX measure, adjust report configuration, and redeploy the asset.

That is the Fabric version of shortening the track.

Not because every tool becomes the same.

Because the agent gets a common operating layer across tools that used to require humans to translate between them.

And this matters more in data than in app development because data failures are often quiet.

An app usually fails loudly. The deployment breaks. The endpoint crashes. The logs show the exception.

Data can fail politely.

A notebook can run successfully and still duplicate rows. A pipeline can complete and still filter out the wrong records. A semantic model can refresh and still produce the wrong total. A DAX measure can calculate exactly as written and still not match the business definition. A dashboard can load beautifully and still point the business in the wrong direction.
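The duplicated-rows case is easy to reproduce. In this minimal sketch (plain Python, hypothetical `orders` and `customers` tables, no Spark or Fabric APIs), a lookup join completes without any error and still inflates the revenue total, because the dimension table carries two rows for one customer. A uniqueness check on the join key is the kind of cheap test that catches it before the number reaches a report.

```python
from collections import Counter
from typing import Any, Dict, List

Row = Dict[str, Any]

orders: List[Row] = [
    {"order_id": 1, "customer_id": "A", "amount": 100},
    {"order_id": 2, "customer_id": "B", "amount": 50},
]
# Meant to be one row per customer, but "A" was loaded twice
# (say, an address change inserted as a new row instead of an update).
customers: List[Row] = [
    {"customer_id": "A", "region": "East"},
    {"customer_id": "A", "region": "West"},
    {"customer_id": "B", "region": "East"},
]


def inner_join(left: List[Row], right: List[Row], key: str) -> List[Row]:
    """Plain inner join: every left row pairs with every matching right row."""
    index: Dict[Any, List[Row]] = {}
    for right_row in right:
        index.setdefault(right_row[key], []).append(right_row)
    return [
        {**left_row, **right_row}
        for left_row in left
        for right_row in index.get(left_row[key], [])
    ]


def duplicate_keys(rows: List[Row], key: str) -> List[Any]:
    """Keys that appear more than once; should be empty for a dimension table."""
    counts = Counter(row[key] for row in rows)
    return sorted(k for k, n in counts.items() if n > 1)


joined = inner_join(orders, customers, "customer_id")
total_before = sum(o["amount"] for o in orders)  # 150
total_after = sum(r["amount"] for r in joined)   # 250, with no error raised
print(total_before, total_after, duplicate_keys(customers, "customer_id"))
```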

In data work, green does not always mean good.

That is why a tighter loop matters.

The agent should not just ask, “Did it run?”

It should help ask better questions:

Did the row count change unexpectedly? Did the schema drift? Did this join multiply records? Does this measure still match the business definition? Did the report change in a way that affects interpretation? Does the output still line up with what the stakeholder meant?
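Several of those questions can be turned into automated checks. The sketch below is illustrative. The function names, the 10% row-count threshold, and the 0.5% measure tolerance are assumptions, and the reference figure for the measure has to come from whoever owns the business definition. The last two questions, about interpretation and intent, do not reduce to a function; they stay with people.

```python
import math
from dataclasses import dataclass
from typing import Dict


@dataclass
class Check:
    name: str
    passed: bool
    detail: str


def row_count_check(previous: int, current: int, max_change: float = 0.10) -> Check:
    """Did the row count change unexpectedly? Flags a relative change above max_change."""
    if previous == 0:
        return Check("row_count", current == 0, f"{previous} -> {current}")
    change = abs(current - previous) / previous
    return Check("row_count", change <= max_change, f"{previous} -> {current} ({change:.1%})")


def schema_check(expected: Dict[str, str], actual: Dict[str, str]) -> Check:
    """Did the schema drift? Reports missing, added, and retyped columns."""
    missing = sorted(set(expected) - set(actual))
    added = sorted(set(actual) - set(expected))
    retyped = sorted(c for c in set(expected) & set(actual) if expected[c] != actual[c])
    passed = not (missing or added or retyped)
    return Check("schema", passed, f"missing={missing} added={added} retyped={retyped}")


def fan_out_check(rows_before_join: int, rows_after_join: int) -> Check:
    """Did this join multiply records? A many-to-one lookup join should not add rows."""
    return Check("join_fan_out", rows_after_join <= rows_before_join,
                 f"{rows_before_join} -> {rows_after_join}")


def measure_check(actual: float, reference: float, rel_tol: float = 0.005) -> Check:
    """Does the measure still match the business definition? Compares to a signed-off figure."""
    return Check("measure", math.isclose(actual, reference, rel_tol=rel_tol),
                 f"actual={actual} reference={reference}")


checks = [
    row_count_check(10_000, 10_250),
    schema_check({"order_id": "int", "amount": "decimal"},
                 {"order_id": "int", "amount": "string"}),
    fan_out_check(rows_before_join=2, rows_after_join=3),
    measure_check(actual=250.0, reference=150.0),
]
failed = [c.name for c in checks if not c.passed]
print(failed)  # ['schema', 'join_fan_out', 'measure']
```

Each check returns a result rather than raising, so a single run can report every problem at once instead of stopping at the first.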

That is where the real value is.

Not “AI writes SQL.”

Useful, but small.

The bigger shift is that agents can help operate across the messy middle of data work: the space between source data, transformation logic, semantic modeling, report design, and business validation.

That messy middle is where a lot of data engineering actually lives.

Fabric gives agents a better shot at working there because more of the lifecycle can be exposed through APIs, files, and code-backed definitions. The UI is still useful. People will still build, explore, and review visually. But the more the platform can be represented as code, the more the workflow becomes agent-operable.

That is the practical difference.

The future of data engineering is not clicking through better UIs.

It is making the lifecycle readable, editable, deployable, and testable enough that agents can help carry the baton across the whole loop.

Python. SQL. Pipelines. Lakehouses. Warehouses. DAX. Semantic models. Reports.

Different runners.

Different languages.

Same compressed track.

Final Thoughts: Stop Optimizing the Relay

For years, most improvement in data work has been about making each runner faster.

Better pipeline tools. Better notebooks. Better BI platforms. Better deployment systems. Better cloud consoles. Better project management.

All useful.

But none of that changes the shape of the work.

You can make the engineer faster. You can make the analyst faster. You can make deployment smoother and dashboards easier to build. But if every question still has to run the full track, from stakeholder to engineer to analyst to report to feedback and back again, the system is still expensive.

The race is still too long.

Agents change the shape by shortening the distance between the pieces. That is the real unlock.

Not AI as autocomplete. Not AI as a SQL generator. Not AI as a dashboard gimmick.

AI as feedback compression.

When agents can work across Git, deployment pipelines, cloud logs, databases, Fabric APIs, notebooks, SQL endpoints, semantic models, DAX, and report definitions, the baton does not have to travel across five teams and seven tools before the next useful thing can happen.

The feedback can move while the work is still warm.

That matters because most data problems are not solved in one perfect pass. The first version is usually close, but incomplete. The metric is almost right. The table is almost shaped correctly. The dashboard almost answers the business question.

Then the real work begins.

In the old model, every “almost” starts another race.

In the agentic model, every “almost” becomes the next handoff inside the same loop.

That does not remove the need for data engineers. If anything, it makes engineering judgment more important.

Someone still has to define what good looks like. Someone still has to protect production. Someone still has to decide which checks matter. Someone still has to understand the business meaning behind the metric. Someone still has to know when a quick fix is actually a bad idea wearing a friendly mask.

The agent can keep the baton moving.

The engineer decides where the track should go.

That is the role shift.

Less time walking between tools. More time designing the loop. Less manual translation across systems. More focus on validation, architecture, and business meaning.

This is why the operating layer matters so much. The more our tools expose themselves through APIs, files, logs, CLIs, and code-backed configuration, the more agents can help with the actual lifecycle of data work, not just the text inside one notebook or one SQL file.

Of course, a shorter track still needs lanes.

Git still matters. Pull requests still matter. Environment separation still matters. Automated tests, validation checks, scoped credentials, and rollback paths matter more, not less.

When agents can move faster across more systems, the guardrails have to be clearer. The goal is not to let the agent sprint blindly through production. The goal is to let it move safely inside a loop the engineer designed.
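One way to make the lanes explicit is a small policy gate the agent must pass before acting. This is a minimal sketch. The three environments, the action kinds, and the `POLICY` table are illustrative assumptions. A gate like this is only as strong as the credentials behind it, so the agent's actual permissions should be scoped the same way.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set

# Illustrative policy: what the agent may do on its own in each environment.
POLICY: Dict[str, Set[str]] = {
    "dev": {"read", "write", "deploy"},
    "test": {"read", "deploy"},
    "prod": {"read"},
}


@dataclass(frozen=True)
class Action:
    kind: str         # "read", "write", or "deploy"
    environment: str  # "dev", "test", or "prod"


def is_allowed(action: Action, approved_by: Optional[str] = None) -> bool:
    """Permit actions inside the policy; anything outside it needs a named approver."""
    if action.kind in POLICY.get(action.environment, set()):
        return True
    # Unknown environments fall through to here too, so they always need approval.
    return approved_by is not None


print(is_allowed(Action("read", "prod")))                              # True
print(is_allowed(Action("deploy", "prod")))                            # False
print(is_allowed(Action("deploy", "prod"), approved_by="data-lead"))   # True
```

The default is the important part. Anything the policy does not name, including an environment nobody listed, routes to a person rather than going through silently.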

The future of data development is not a faster relay race.

It is a shorter track. Cleaner handoffs. Less coordination overhead. And a feedback loop tight enough to learn while the work is still warm.